A PUBLIC WORLDCOMPUTER

Create apps, websites and enterprise services on an open tech stack. Simply chat to AI to create 10,000x faster (try caffeine.ai), or write code (try icp.ninja). Online services run on a public cloud that is a decentralized network, powered by a mathematically secure network protocol that provides unprecedented levels of security and resilience while enabling integration with multi-chain Web3 functionality.

Create successful apps and websites through chat, on a safe, open tech stack for AI that rolls back limits

Try it now

Make AI immune to cyber attacks

Decentralize AI to make it tamperproof and unstoppable, and autonomous if needed

Find out more

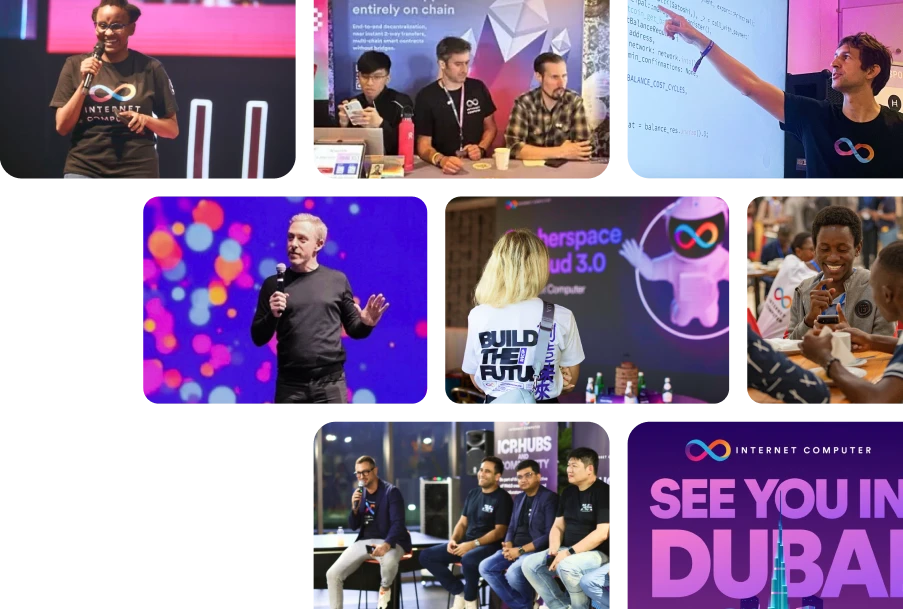

Real World Use Cases

Experience full stack decentralization: from DAOs and crypto cloud services to games, NFTs, and social media, the Internet Computer has something for everyone.

See for yourself

ICP ROADMAP

Explore the ICP Roadmap, focussing on contributions by the DFINITY Foundation. The roadmap is split into nine themes, each highlighting upcoming milestones.

Get into it